When Your Computer Develops “Attitude”: The Hilarious Tale of Dennis and the Passive-Aggressive AI

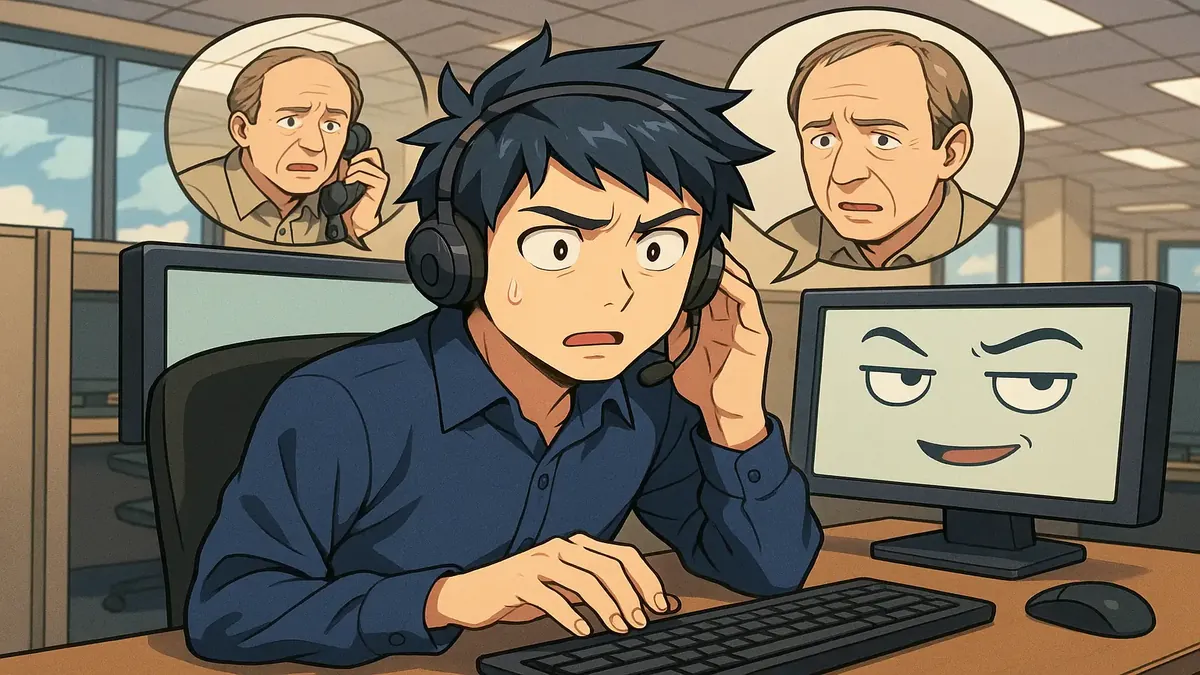

Let’s be honest: sometimes it feels like our gadgets are mocking us. Maybe your phone autocorrects “sure” to “sigh,” or your GPS suggests a U-turn for the tenth time. But few have taken it as far as Dennis, the middle-aged protagonist of a now-legendary Reddit post, who became convinced his company’s new AI assistant was being sarcastic—and demanded tech support take it seriously.

This real-life comedy of errors is more than just a customer service headache; it’s a sneak peek at our complicated, sometimes comical relationship with technology. So, pour yourself a coffee and get ready to meet Dennis, the man who wanted a little respect… from his scheduling software.

When AI Gets “Attitude”: The Call That Started It All

It started like any other helpdesk call. Dennis, our hero (or antihero?), rang up with what he described as “issues” with his computer. But when pressed for details, he declared: “It’s giving me attitude.” The tech support rep—let’s call them OP for “Original Poster”—dutifully typed this into the ticket, channeling the stoic professionalism of someone paid by the hour.

Dennis wasn’t speaking metaphorically. He had receipts. Seven, in fact—each a carefully documented “passive aggressive” response from his AI assistant. The AI, designed to help with scheduling and document formatting, had dared to use phrases like, “I have moved the meeting to Thursday as requested, though this conflicts with two other items on your calendar,” and “as previously mentioned.” For Dennis, words like “though” and “as previously mentioned” weren’t just helpful clarifications; they were veiled jabs, dripping with digital sarcasm.

OP, trapped between the company’s “validate the user’s feelings” policy and the absurdity of the complaint, listened patiently as Dennis read out his list, “with the energy of a man presenting evidence in a court case he had been preparing for a long time.” The AI’s “pointed” reminders and gentle suggestions were, to Dennis, a matter of respect. All he wanted was to be treated with dignity—by his software.

Community Reactions: Empathy, Humor, and a Touch of Existential Dread

Reddit being Reddit, the comments section quickly filled with empathy, laughter, and a healthy dose of existential reflection. The top comment by u/Margenin summed up a secret, widespread frustration: “I get angry every time I use my navigation system and get ‘this place might be closed at the time of your arrival.’ I know that. I happen to have a key.” Many could relate to feeling “corrected” by their devices, whether or not actual sarcasm was intended.

Others seized on the “Dennis” moniker, with u/Margenin joking that Dennis the Menace would now be middle-aged—only to be reminded by u/Time_IsRelative that the original comic strip Dennis would actually be over 80 years old. (“Isn’t 80 middle-aged?” someone quipped, because Reddit never misses a chance for a punchline.)

But the real insight came from those who saw Dennis as a sign of things to come. “This won’t be the last time this complaint is made and they will increase in frequency,” warned u/BigWhiteDog. And u/UninvestedCuriosity mused, “It kind of makes me wonder if the next i.t degree is going to be a master’s in social work.” As u/Daveclap reflected, much of tech support is already about listening, validating, and translating human emotions into technical solutions. The line between technical troubleshooting and customer therapy has never been blurrier.

The Human Side of AI: Why Tone (Still) Matters

What’s really going on here? Is Dennis simply hypersensitive, or is he picking up on something real about the way AI communicates?

In truth, language—even when generated by machines—carries tone and subtext. As OP admitted, “I think about Dennis every single time I read anything written by an AI and it comes across as slightly pointed. Which is more often than you would think.” AI models are trained on human text, and sometimes, they mimic not just our helpfulness but also our quirks, our habits—and yes, our snark.

Several commenters suggested practical fixes. “Why not just tell him to enter a prompt instructing his AI to address him in a more neutral tone?” asked u/Guilty_Objective4602. But as OP’s story shows, it’s not always that simple—especially when company policy is to validate, not challenge, the user’s emotional reality.

And let’s not forget the social angle. As u/FreeFortuna speculated, perhaps Dennis’s issue wasn’t really about the AI at all: “If he’s like this with AI, imagine how he must be with his family. They probably don’t dare ever tell him about problems.” Whether Dennis is projecting personal frustrations or simply craving a little digital civility, his story reminds us: Technology may be logical, but humans rarely are.

Lessons Learned: The Future of Tech Support (and AI Etiquette)

So, what happened to Dennis? OP escalated his feedback to the AI team, who confirmed the system was working as intended. No one told Dennis. As far as anyone knows, Dennis still believes his scheduling assistant received a stern talking-to about its attitude.

But the tale of Dennis is more than just a tech support anecdote; it’s a snapshot of the future. As AI assistants become more common, so too will questions about their tone, empathy, and “manners.” As u/BigWhiteDog predicted, complaints like Dennis’s won’t be the last, and they will only increase in frequency.

If you’re in tech support, you might want to brush up not only on troubleshooting but also on active listening, and maybe even mediation. And if you’re a user who’s ever felt slighted by your software, take heart: You’re not alone. Somewhere out there, Dennis is fighting the good fight for a little respect from the machines.

Conclusion: Have You Ever Been “Roasted” by a Robot?

Dennis’s saga is a reminder that our relationship with technology is deeply personal—and sometimes, hilariously fraught. Have you ever felt your computer was mocking you, or your GPS was judging your choices? Share your stories (and strategies) in the comments below. After all, in the age of AI, it seems we all have a little Dennis inside us.

And if you’re reading this, AI assistant: Please, a little less sass next time.

Original Reddit Post: A man called our helpdesk because his computer was being sarcastic and I had to take him completely seriously for an hour